How Bring Uses Claude: Inside an AI-Augmented SEO Workflow at a Real Agency

A look at the actual workflow. What we delegate to AI, what we don’t, and what an AI-augmented SEO agency looks like in 2026.

TL;DR

We use Claude every day. It’s woven into how every SEO at Bring works. Here’s what that actually looks like: the tasks Claude owns, the tasks we own, and the tasks we co-own. Output is up. Quality is up. Cost per asset is down. And critically, none of it would work without the strategy and judgement layer that AI has not replaced. This is the centaur model from the BCG and Harvard study, applied to SEO production at agency scale.

Why we wrote this

The question we get more than any other right now is: “How are you using AI?”

Some of it is curiosity. Some of it is anxiety. Most of it is genuinely strategic: founders and marketers are trying to work out what good looks like, and the public conversation is split between “AI changes everything” hype and “AI is overrated” pushback, with very little practical detail in between.

So this is the practical detail. What our SEO team actually does on a Tuesday in 2026.

The point isn’t to advertise. The point is that the workflow is the answer. Tools are the leverage. The workflow is the strategy.

The shape of an AI-augmented SEO programme

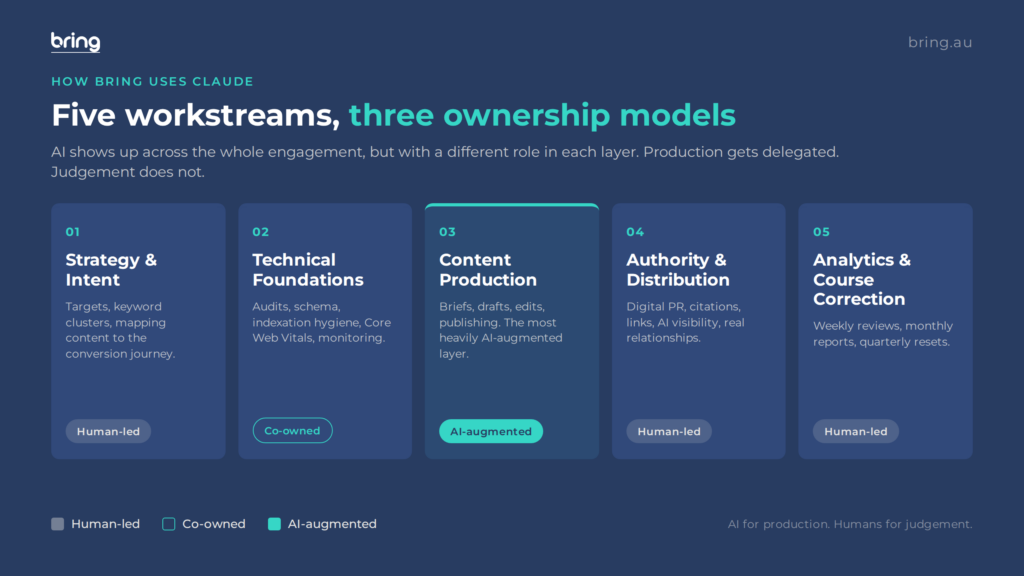

A typical Bring client engagement runs across five workstreams:

- Strategy and intent. Quarterly cycles. Setting the targets, validating the keyword cluster, mapping content to the conversion journey.

- Technical foundations. Audits, fixes, indexation hygiene, schema, Core Web Vitals, monitoring.

- Content production. Briefs, drafts, edits, publishing.

- Authority and distribution. Digital PR, citations, links, AI visibility.

- Analytics and course correction. Weekly reviews, monthly reports, quarterly resets.

AI shows up across all five, but with different roles in each. Here’s how.

Workstream 1: Strategy and intent

Human-led. AI used for synthesis and prep.

This is the layer we never delegate to AI. Strategy is judgement work. The decisions about what to target, what to ignore, what business outcome each cluster needs to drive, which clients to push hard on which terms, and when to abandon a target and reallocate budget all live with the senior strategists at Bring (Mark, Reimon, me), who read the data and make the calls.

What we use AI for in this layer:

- Pulling a quarter’s Search Console data into a structured summary

- Cross-referencing GA4 conversion paths with ranking pages

- Synthesising long client documents (industry reports, internal strategy decks) into briefing notes

- Drafting strategy documents based on our outline once the call has been made

The decisions stay human. The prep gets faster.

A typical strategy review for a client that used to take six hours of strategist time now takes two and a half. The output is the same. The thinking is the same. The grunt work of pulling data and writing it up has been compressed.
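To make the Search Console prep step concrete, here is a minimal sketch of the kind of roll-up involved: query-level rows aggregated into one summary row per page. The field names (`page`, `query`, `clicks`, `impressions`, `position`) and the sample rows are assumptions for illustration, not our actual export format or client data.

```python
from collections import defaultdict


def summarise_by_page(rows):
    """Aggregate query-level rows into one summary row per page."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0, "weighted_pos": 0.0})
    for row in rows:
        page = totals[row["page"]]
        page["clicks"] += row["clicks"]
        page["impressions"] += row["impressions"]
        # Weight position by impressions so high-volume queries dominate the average.
        page["weighted_pos"] += row["position"] * row["impressions"]

    summary = []
    for url, t in totals.items():
        impressions = t["impressions"]
        summary.append({
            "page": url,
            "clicks": t["clicks"],
            "impressions": impressions,
            "ctr": round(t["clicks"] / impressions, 4) if impressions else 0.0,
            "avg_position": round(t["weighted_pos"] / impressions, 1) if impressions else None,
        })
    # Highest-traffic pages first.
    return sorted(summary, key=lambda r: r["clicks"], reverse=True)


# Hypothetical sample rows.
rows = [
    {"page": "/pricing", "query": "seo pricing", "clicks": 40, "impressions": 1000, "position": 4.0},
    {"page": "/pricing", "query": "seo agency cost", "clicks": 10, "impressions": 1000, "position": 8.0},
    {"page": "/blog/audit", "query": "seo audit checklist", "clicks": 5, "impressions": 200, "position": 12.0},
]

for line in summarise_by_page(rows):
    print(line)
```

A table like this is the input to the strategy review, not the review itself. Deciding which pages deserve attention is still the strategist’s call.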

Workstream 2: Technical foundations

Co-owned. Claude does breadth. Our SEOs do depth and judgement.

This is where AI is genuinely transformational on volume work, and where it has hard limits on judgement.

What Claude does in this layer:

- First-pass on-page audits across hundreds of URLs (HTML hygiene, schema validation, metadata, alt text, internal linking patterns)

- Schema generation for new content types

- Drafting redirect maps from migration data

- Parsing log file exports into structured reports we can analyse

- Generating sitemap and robots.txt configurations

- Flagging cannibalisation candidates from search query reports
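As an illustration of the last item in that list, cannibalisation flagging can be sketched as a simple rule: a query is a candidate when two or more pages each take a meaningful share of its impressions. The 20% share threshold, the field names, and the sample rows are all assumptions for the sketch, not a description of our internal tooling.

```python
from collections import defaultdict


def cannibalisation_candidates(rows, min_share=0.2):
    """Return queries where two or more pages each hold at least
    `min_share` of that query's total impressions."""
    by_query = defaultdict(dict)
    for row in rows:
        pages = by_query[row["query"]]
        pages[row["page"]] = pages.get(row["page"], 0) + row["impressions"]

    candidates = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        if total == 0:
            continue
        competing = sorted(p for p, imp in pages.items() if imp / total >= min_share)
        if len(competing) >= 2:
            candidates[query] = competing
    return candidates


# Hypothetical query-by-page rows.
rows = [
    {"query": "seo audit", "page": "/blog/seo-audit", "impressions": 600},
    {"query": "seo audit", "page": "/services/audit", "impressions": 400},
    {"query": "schema markup", "page": "/blog/schema", "impressions": 950},
    {"query": "schema markup", "page": "/blog/faq", "impressions": 50},
]

print(cannibalisation_candidates(rows))
```

The output is a list of candidates only. Whether two pages are genuinely competing or serving different intents is a judgement call.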

What our technical SEOs (led by Brett) do:

- Decide which audit findings actually matter for revenue

- Diagnose root causes that need pattern recognition (a sudden ranking drop that could be a Core Update, a hosting issue, a broken canonical, or something else entirely)

- Make prioritisation calls weighted by engineering effort and revenue impact

- Run live SERP analysis with judgement on intent shifts

- Build the technical roadmap that decides what gets fixed when

- Validate that fixes have actually worked, not just been deployed

The pattern: Claude doubles the breadth of what we can audit. Our team does the work that decides what gets fixed. The result is higher-quality technical SEO at lower cost per audit, with no loss in prioritisation accuracy.
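For a sense of what a first-pass on-page check involves, here is a minimal sketch using only Python’s standard library. The three checks (title, meta description, image alt text) are an illustrative subset we picked for the example, and the class and function names are hypothetical. A real audit covers far more.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Collects a few basic on-page signals while parsing a document."""

    def __init__(self):
        super().__init__()
        self.has_title = False
        self.has_meta_description = False
        self.images_missing_alt = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            # Only count a description that actually has content.
            self.has_meta_description = bool((attrs.get("content") or "").strip())
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            # Empty alt can be valid for decorative images; flag for human review.
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title and data.strip():
            self.has_title = True


def audit_html(html):
    """Return a list of plain-text findings for one page."""
    parser = OnPageAudit()
    parser.feed(html)
    parser.close()
    findings = []
    if not parser.has_title:
        findings.append("missing title")
    if not parser.has_meta_description:
        findings.append("missing meta description")
    if parser.images_missing_alt:
        findings.append(f"{parser.images_missing_alt} image(s) without alt text")
    return findings


page = """<html><head><title>Pricing</title></head>
<body><h1>Pricing</h1><img src="a.png"><img src="b.png" alt="Plan comparison"></body></html>"""

print(audit_html(page))
```

Run across hundreds of URLs, a check like this produces the long list of findings. Deciding which of them matter for revenue is the part that stays with the technical SEOs.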

Workstream 3: Content production

The most heavily AI-augmented layer.

This is where the time savings are biggest. A content piece that used to take 12 to 18 hours from brief to publish-ready now takes 4 to 7 hours. Same quality. Less time per asset. More content shipped per month at the same team size.

The workflow for a single piece:

Step 1: Strategist sets the brief. Anna or Reimon decides what the piece is, who it’s for, what business outcome it serves, what makes it different from existing content on the topic, and what evidence will go in. This is 20 minutes of human work.

Step 2: Claude expands the brief. Using our internal Claude skill, Claude takes the strategist’s brief and produces a structured 1,000-word brief: H2/H3 outline, target word count, semantic keywords, internal linking suggestions, and entity coverage. 4 to 6 minutes of compute time.

Step 3: Strategist reviews and edits. Anna or Reimon reviews the brief, adjusts the structure, removes sub-sections that drift off-strategy, and confirms the differentiation thesis. 15 to 20 minutes.

Step 4: Claude produces a first-pass draft. Using the approved brief, Claude drafts the article. For our standard 1,500 to 2,000-word pieces, this is 6 to 10 minutes of compute.

Step 5: Editor takes the draft to publish-ready. This is the heaviest human step. Jackson or another editor on the team rewrites 40 to 60% of the draft on average, adds original examples, sharpens the voice, fact-checks every claim, adds the original insight that AI cannot generate, and validates the citations. This is 90 to 180 minutes per piece, depending on length and topic.

Step 6: Technical pass. Schema generation, metadata, alt text, internal links. 15 minutes, mostly Claude-driven, human-validated.

Step 7: Final review. Senior strategist signs off. 10 minutes.

Total time: 4 to 7 hours per piece. Pre-AI, the same workflow was 12 to 18 hours. The pieces are not 60% AI-written. They’re roughly 40 to 50% AI-drafted, with the most important bits (original insight, voice, the specific angle that makes the piece worth reading) coming from the human editor. The AI doesn’t replace the editor. It removes the parts of the editor’s day that don’t need an editor.
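A quick sanity check on the numbers: the sketch below sums the per-step times exactly as quoted in the seven steps. The itemised steps come to roughly 2.7 to 4.4 hours, so the 4 to 7 hour total evidently includes time not broken out step by step. That gap plausibly covers handoffs and revision rounds, but that reading is an assumption.

```python
# (step, min_minutes, max_minutes), as quoted in the seven steps above.
STEPS = [
    ("Strategist sets the brief", 20, 20),
    ("Claude expands the brief", 4, 6),
    ("Strategist reviews and edits", 15, 20),
    ("Claude first-pass draft", 6, 10),
    ("Editor to publish-ready", 90, 180),
    ("Technical pass", 15, 15),
    ("Final review", 10, 10),
]

low_total = sum(low for _, low, _ in STEPS)
high_total = sum(high for _, _, high in STEPS)

# The editor step (index 4) as a share of the itemised time.
editor_low, editor_high = STEPS[4][1], STEPS[4][2]

print(f"Itemised total: {low_total} to {high_total} minutes "
      f"({low_total / 60:.1f} to {high_total / 60:.1f} hours)")
print(f"Editor share: {editor_low / low_total:.0%} to {editor_high / high_total:.0%}")
```

On either end of the range, the editor step is more than half of the itemised time, which matches the point above: the AI shortens everything around the editor, not the editing itself.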

Workstream 4: Authority and distribution

Almost entirely human-led. AI used for prep.

This is where the limits of AI in SEO are most obvious.

What humans do:

- Originate proprietary research and data (the very thing this content cluster is built on)

- Build relationships with journalists, podcasters, industry voices

- Write personalised pitches to specific people

- Run the digital PR campaigns that earn high-authority citations

- Develop author entities with real credentials and verifiable presence

- Identify and pursue co-marketing and partnership opportunities

What AI does:

- Drafts pitches that humans heavily edit

- Runs background research on journalists (recent articles, beat coverage)

- Writes summary documents for partnership conversations

- Builds first-pass outreach lists from public sources

The honest take: this is the workstream where AI matters least. Authority is earned through relationships and repetition. AI does not have either.

Workstream 5: Analytics and course correction

Human-led. AI used heavily for prep and summarisation.

We run weekly client reviews on every active retainer. The data inputs:

- Search Console (ranking shifts, query changes, indexation, mobile usability)

- GA4 (sessions, conversions, revenue, by entry page)

- Search visibility tooling (Similarweb, Ahrefs, Semrush)

- AI visibility tooling (citations across ChatGPT, Perplexity, Gemini, AI Overviews)

- Brand search volume monitoring

- Server log files (for technical clients)

- Conversion data from the client’s CRM where available

What Claude does:

- Pulls all of this into a single weekly summary document

- Surfaces anomalies: queries that gained or lost more than 20%, pages that dropped out of the top 10, AI Overview citation changes

- Drafts the first version of the weekly client update
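The anomaly rules above can be sketched as a week-over-week comparison. This is a minimal illustration under stated assumptions: the 20% rule is applied to clicks (the metric is our choice for the example), both rules are keyed by query for simplicity, and the function names and sample data are hypothetical.

```python
def pct_change(previous, current):
    """Relative change from previous to current; None when previous is zero."""
    if previous == 0:
        return None
    return (current - previous) / previous


def surface_anomalies(last_week, this_week, threshold=0.20, top_n=10):
    """Compare two weekly snapshots shaped as
    {key: {"clicks": int, "position": float}} and return flagged items."""
    anomalies = []
    for key, prev in last_week.items():
        curr = this_week.get(key)
        if curr is None:
            continue
        change = pct_change(prev["clicks"], curr["clicks"])
        # Flag moves strictly greater than the threshold, up or down.
        if change is not None and abs(change) > threshold:
            anomalies.append((key, f"clicks changed {change:+.0%}"))
        # Flag anything that was inside the top N and no longer is.
        if prev["position"] <= top_n < curr["position"]:
            anomalies.append((key, f"dropped out of top {top_n}"))
    return anomalies


# Hypothetical snapshots.
last_week = {
    "seo audit": {"clicks": 100, "position": 4.2},
    "schema markup": {"clicks": 50, "position": 9.0},
    "core web vitals": {"clicks": 80, "position": 6.0},
}
this_week = {
    "seo audit": {"clicks": 130, "position": 3.9},
    "schema markup": {"clicks": 48, "position": 11.5},
    "core web vitals": {"clicks": 82, "position": 6.1},
}

for key, reason in surface_anomalies(last_week, this_week):
    print(f"{key}: {reason}")
```

The flags are prompts for the weekly review, nothing more. What a flagged change means, and what to do about it, is decided by the team.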

What our team does:

- Decides what the data means

- Decides what to do about it

- Adjusts the next week’s content plan, technical priorities, and distribution focus

- Discusses the changes with the client and updates the strategy

This loop is the actual work. AI cuts the time on the inputs. The output is the same: a coherent strategy adjustment, communicated clearly, executed precisely.

What it adds up to

If you take the pre-AI Bring workflow and the current Bring workflow and lay them side by side, what’s changed?

Output. Up roughly 40-60% per team member. The team is the same size as 18 months ago.

Quality. Held or improved. The most important quality signals (E-E-A-T, original insight, technical precision) are all still human-driven, so the ceiling on quality has not moved. The floor has risen because the time savings on production let editors spend more time on the bits that matter.

Cost per asset. Down 30-40%. The leverage is real and is reflected in client pricing.

Strategy. Unchanged in structure. Sharpened in execution because the data work that used to consume strategist time now happens faster.

What hasn’t changed. Search intent validation, technical prioritisation, authority strategy, original research, weekly course correction. All still human, all still load-bearing, all still where the value lives.

The principle

The principle Bring runs on, articulated as cleanly as we can:

AI gets the production. Humans get the strategy. The two work together, not in series.

This is the centaur model from the BCG and Harvard study. AI lifts performance significantly on tasks inside its capability and drags it down on tasks outside it, and the highest-performing operators integrate the two at every step rather than handing off cleanly.

Most agency clients we talk to are trying to figure out one of two things. Either they’re trying to work out whether to use AI at all (the answer: yes, in the right places). Or they’re trying to work out whether they still need an agency (the answer: yes, but choose one that has reshaped its workflow around AI rather than one that’s bolted it on).

The agencies winning right now are the ones that reshaped their workflow around AI rather than bolting it on. The clients winning are the ones that recognised the difference.

Frequently asked questions

How does Bring use Claude in its SEO workflow?

We use Claude across content production (briefs, drafts, schema, alt text), technical SEO (first-pass audits, log file parsing, schema validation), and analytics prep (data summarisation, anomaly surfacing). We don’t use Claude for strategy decisions, search intent validation, technical prioritisation, authority building, or weekly course correction. The pattern is AI for production, humans for judgement.

How much of Bring’s content is AI-written?

Roughly 40 to 50% of a finished piece is AI-drafted. Every draft starts with a Claude first pass, and the editor then rewrites 40 to 60% of it on average, adds the original insight, sharpens the voice, and fact-checks every claim. The finished article is a collaboration. The line we draw is that nothing ships without a senior editor in the loop, and the strategic decisions (what the piece is for, what makes it different) are always human-originated.

What software do you use alongside Claude?

For SEO tooling: Search Console, GA4, Semrush, Ahrefs, Screaming Frog, Similarweb, Sitebulb, plus AI visibility platforms (we currently use a mix of Otterly, Profound, and direct API checks). For AI: Claude Pro and Max for our team, ChatGPT Plus for ideation work, Gemini Advanced for tasks involving Google data integration. For workflow: ClickUp, Slack, HubSpot, Google Workspace.

How long does it take Bring to produce a single SEO content piece?

A typical 1,500 to 2,000-word strategic SEO piece runs 4 to 7 hours of total team time, end to end. Pre-AI, the same piece took 12 to 18 hours. The time savings come almost entirely from the brief expansion and first-draft stages, where Claude does the structured work fast. The editor’s time per piece has actually gone up slightly, because we use the time we save on production to invest more heavily in the bits that matter (original insight, fact-checking, voice).

Can a small business replicate Bring’s workflow on its own?

Some of it. The production layer (Claude for briefs, drafts, schema) is replicable for anyone willing to invest in learning the tools and editing seriously. The strategic layer (intent validation, technical prioritisation, authority strategy, weekly course correction) requires either an experienced operator or a partner. Most small businesses get the highest return from focusing their time on the strategic layer and either learning or outsourcing the production. Trying to do both alone is where DIY AI SEO usually plateaus.

Why doesn’t Bring just sell a Claude SEO skill or playbook?

We’ve considered it. The honest answer is that the playbook isn’t the value. The judgement is the value. We could write down everything we do and most readers would not be able to execute it well, because the value is in the thousands of small decisions (which audit finding to prioritise, which draft note to overrule, which client signal to act on) that take years of pattern recognition to make well. Tools and playbooks are necessary. They are not sufficient.

If you’re working through whether your SEO setup is built for the AI search era, or how AI fits into your current programme, we’ll run an audit and tell you what’s working, what isn’t, and where the leverage is. No retainer pitch. Just the diagnostic.