TL;DR

On 15 May 2026, Google Search Central published an official guide to optimising for generative AI search features (AI Overviews and AI Mode). The headline position: “AEO” (answer engine optimisation) and “GEO” (generative engine optimisation) are still SEO from Google’s perspective. The guide explicitly calls out four widely promoted AI optimisation tactics as unnecessary for Google Search: llms.txt files, content chunking, rewriting content specifically for AI systems, and chasing inauthentic mentions. It also confirms the mechanics: AI Overviews and AI Mode use retrieval-augmented generation (RAG) grounded in Google’s existing Search index, plus a technique called query fan-out that runs concurrent related queries. The thing Google did not say: that any of this applies to ChatGPT, Perplexity, Claude, or any other AI search system. Those systems retrieve content differently, and the AEO/GEO tactics Google dismissed for Google Search can still matter for the rest of the AI search landscape. The practical answer: ship good content with clear structure, ignore most of the “AI-specific hacks,” and pay attention to the agentic experiences section, which is where the next 12 months of work actually sits.

What Google actually said

The new guide is short by Google’s documentation standards (roughly 2,000 words) and lands a clear position. Three things matter most.

One: AEO and GEO are SEO. Google’s exact phrasing: “From Google Search’s perspective, optimising for generative AI search is optimising for the search experience, and thus still SEO.” This is the most important sentence in the document. It tells the industry that Google does not see AEO or GEO as separate disciplines requiring separate tactics, separate budgets, or separate consultants. If you have been pitched a six-figure “GEO retainer” lately (or are weighing up running your own AI-augmented SEO), this sentence is the one to forward to your finance team.

Two: Google confirmed the mechanics. AI Overviews and AI Mode run on retrieval-augmented generation, which Google also calls “grounding.” The model retrieves pages from Google’s existing Search index using the same ranking systems that surface ordinary search results, then synthesises an answer with citations. On top of that sits query fan-out, where the model generates several related queries concurrently. A user asking “how to fix a lawn full of weeds” might trigger fan-out queries like “best herbicides for lawns,” “remove weeds without chemicals,” and “how to prevent weeds in lawn.” Each of those fan-out queries pulls more pages, which inform the synthesised answer. The implication: any page that ranks for any related query in your category has a chance of being cited in an AI answer. Topical coverage matters more than ever. Hyper-specific landing-page-per-keyword strategies still don’t work.
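To make the fan-out idea concrete, here is a minimal Python sketch. It is our own toy illustration, not Google’s implementation: the index, the URLs, and the hard-coded related queries are all hypothetical stand-ins for what a real model and ranking system would produce. It shows only the shape of the mechanism: one question becomes several queries, and the pool of candidate pages is the union of what each query retrieves.

```python
from collections import defaultdict

# Toy index: query -> ranked page URLs. Every query and URL here is hypothetical.
TOY_INDEX = {
    "how to fix a lawn full of weeds": [
        "example.com/lawn-weed-guide",
        "example.org/weed-basics",
    ],
    "best herbicides for lawns": [
        "example.net/herbicide-reviews",
        "example.com/lawn-weed-guide",
    ],
    "remove weeds without chemicals": ["example.org/organic-weeding"],
    "how to prevent weeds in lawn": [
        "example.com/lawn-weed-guide",
        "example.net/lawn-care-calendar",
    ],
}

# Hard-coded stand-in for the related queries a model would generate.
RELATED_QUERIES = {
    "how to fix a lawn full of weeds": [
        "best herbicides for lawns",
        "remove weeds without chemicals",
        "how to prevent weeds in lawn",
    ],
}


def fan_out(query: str) -> list[str]:
    """Return the original query followed by its related queries."""
    return [query] + RELATED_QUERIES.get(query, [])


def candidate_pages(query: str) -> dict[str, list[str]]:
    """Map each retrieved page to the fan-out queries that surfaced it."""
    surfaced_by = defaultdict(list)
    for q in fan_out(query):
        for url in TOY_INDEX.get(q, []):
            surfaced_by[url].append(q)
    return dict(surfaced_by)


pages = candidate_pages("how to fix a lawn full of weeds")
# List pages surfaced by the most queries first.
for url, queries in sorted(pages.items(), key=lambda kv: -len(kv[1])):
    print(f"{url}: surfaced by {len(queries)} of {len(fan_out('how to fix a lawn full of weeds'))} queries")
```

In this toy data, the page with broad topical coverage is surfaced by three of the four queries, while each narrow page is surfaced by one. That is the intuition behind the topical coverage point, under the assumptions stated here.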

Three: the four myths. Google explicitly listed four tactics it considers unnecessary or actively unhelpful for Google Search:

- llms.txt files and similar “AI-specific” markup

- Content chunking

- Rewriting content specifically for AI systems

- Chasing inauthentic mentions

Each of these has been the foundation of an AEO consulting pitch in the last twelve months. Each is now officially redundant for Google Search. Worth walking through them properly.

The four “myths” and what’s actually right about each

Myth 1: llms.txt and AI-specific markup

What Google said: You don’t need to create new machine-readable files, AI text files, or markup for Google to surface your content in AI Overviews or AI Mode. Standard HTML is enough.

Our read: Google is right on the narrow point. If you were planning to add llms.txt because someone on LinkedIn told you it would unlock AI visibility, save the engineering hours. There is no evidence it does anything for Google Search.

Where this gets nuanced: Some non-Google AI systems do read llms.txt when it’s present, and it’s a near-zero-cost file to publish. We are not telling clients to remove llms.txt files they already have. We’re telling clients not to expect commercial uplift from publishing one, and not to delay other work to build one. There’s a long tail of “marketing tactics that don’t hurt and might help a bit” and llms.txt is sitting comfortably in it.

Myth 2: Content chunking

What Google said: “There’s no requirement to break your content into tiny pieces for AI to better understand it. Google systems are able to understand the nuance of multiple topics on a page and show the relevant piece to users.”

Our read: This is the line that most of the AEO consulting industry will push back on hardest this week. The honest answer: Google is correct that you don’t need to literally chunk your content into 400-word blocks separated by horizontal rules. Google’s retrieval system is sophisticated enough to extract relevant passages from longer content.

Where this gets nuanced: “You don’t need to chunk for Google’s retrieval system” is not the same as “page structure doesn’t matter.” The reason chunking advice took hold is that well-structured content with clear headings, self-contained sections, and answer-first paragraphs gets cited more often by multiple AI systems. Not because of chunking specifically. Because clear structure is good writing. ChatGPT, Perplexity, and Claude.ai (which retrieve from their own search providers, not Google’s index) all reward this kind of structure as well. The recommendation stands. The framing changes. Structure for clarity, not for “chunking.”

Myth 3: Rewriting content specifically for AI

What Google said: “AI systems can understand synonyms and general meanings of what someone is seeking, in order to connect them with content that might not use the same precise words. This means you don’t have to worry that you don’t have enough ‘long-tail’ keywords or haven’t captured every variation of how someone might seek content like yours.”

Our read: Strongly agree. Writing the same page seventeen times with different keyword variations was already on the wrong side of Google’s helpful content updates. Now it’s officially on the wrong side of Google’s AI search guidance too. If your content strategy currently runs on “spin a thousand variations of every commercial keyword,” kill it.

Where this gets nuanced: There is a real difference between writing the same page seventeen times (bad) and answering the actual range of questions your prospects ask (good). Topical coverage still matters. The test: does this new piece of content add a unique perspective or expand the topic, or is it just a keyword variant of a page you already published? If it’s the latter, don’t write it.

Myth 4: Inauthentic mentions

What Google said: “Seeking inauthentic ‘mentions’ across the web isn’t as helpful as it might seem.” Google’s core ranking systems focus on quality content while other systems block spam, and the AI features depend on both.

Our read: Also strongly agree. The PR-link-spam industry was already on borrowed time. AI search citations are pulled from the same web Google is already ranking, which means low-quality forum drops and sponsored “expert mentions” carry the same risk in AI Overviews as they do in regular search.

Where this gets nuanced: Authentic earned mentions in industry listicles, comparison content, and category review pieces still drive AI citations. Around a third of AI citations come from comparative listicle content. The difference between authentic and inauthentic isn’t whether it’s a “mention.” It’s whether the publication is real, the writer is real, and the inclusion was earned on merit. Digital PR done properly still works. Digital PR done as cheap link insertion does not.

The bit Google chose not to address

This is where the strategic reading gets interesting.

The Google guide speaks only for Google Search. The phrase “Google Search” or “Google” appears 38 times in the document. There is no mention of ChatGPT, no mention of Perplexity, no mention of Claude, no mention of Microsoft Copilot, no mention of Meta AI, no mention of any system Google does not own.

This matters because the AI search landscape is now genuinely multi-platform. ChatGPT alone has approximately 800 million weekly active users worldwide. Perplexity is now embedded in a major mobile operating system. Claude has its own consumer surface. None of these systems pull from Google’s index. Most retrieve through their own search partners (typically Bing, in ChatGPT and Copilot’s case) or their own indexing infrastructure.

The guide is not wrong. It is narrowly scoped. The hacks Google dismissed are dismissed for Google Search. They may still have some effect on other systems. The structural recommendations Google encouraged (helpful content, clear technical structure, original perspective, real experience) work everywhere because they’re good writing principles.

The practical translation: if you optimise for Google Search the way Google now says to optimise, you will do well in Google Search. You will probably do well in ChatGPT and Perplexity too, because the underlying principles overlap. But you should not assume Google’s guide is a complete optimisation strategy for the AI search landscape as a whole. It is a complete optimisation strategy for Google’s slice of it.

The genuinely new bit: agentic experiences and UCP

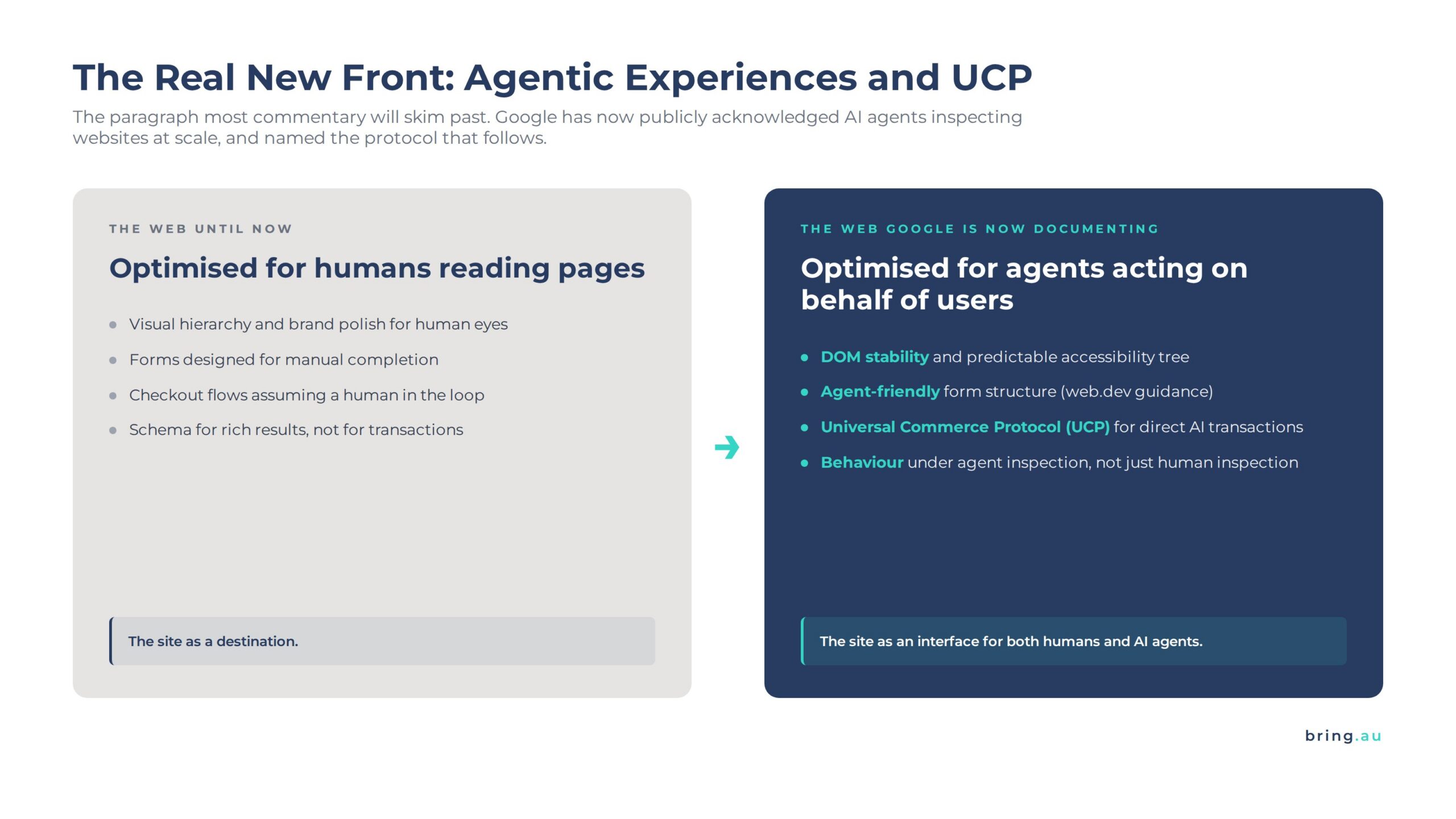

The most interesting paragraph in the guide is one most commentary will skim past. Google introduced a section called “Explore agentic experiences” and named Universal Commerce Protocol (UCP) as an emerging protocol allowing search agents to do more.

This is significant for two reasons.

First, Google has now publicly acknowledged that browser-based AI agents are accessing websites at scale, performing tasks (price comparisons, reservations, product spec lookups) by inspecting the DOM, the accessibility tree, and visual renderings. The guide links to web.dev’s agent-friendly best practices document. The implication: how your site behaves under agent inspection (not just human inspection) is now a thing Google is documenting.

Second, the UCP mention is the first time Google has named a specific cross-vendor protocol for AI commerce. UCP is the emerging standard for letting AI agents transact directly with merchant systems. If you sell anything online, this is the protocol that determines whether an AI agent can complete a purchase on your site or has to send the user back to do it manually. Early movers in UCP-compatible infrastructure will see materially better AI commerce performance over the next 18 months.

If you only do one thing differently after reading Google’s guide, make it this: brief your dev team on UCP, audit your site’s behaviour under agentic inspection (accessibility tree, DOM stability, rendering predictability), and put a planning task on the roadmap for the next quarter.
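As a starting point for that audit, the sketch below shows the kind of check a dev team might run. It is a deliberately crude heuristic of our own, not a method taken from Google’s guide or the web.dev document: it uses Python’s standard `html.parser` to flag images with no `alt` attribute and buttons with neither visible text nor an `aria-label`, two things that tend to leave gaps in what an agent can read from a page. The class name and the sample markup are hypothetical, and a real audit would inspect the browser’s actual accessibility tree rather than raw HTML.

```python
from html.parser import HTMLParser


class AccessibleNameAudit(HTMLParser):
    """Flag <img> tags without alt and <button> tags without text or aria-label.

    Simplification: an image with alt text inside a button is not counted
    as giving that button a name, which a real accessibility tree would do.
    """

    def __init__(self):
        super().__init__()
        self.issues = []
        self._button = None  # state for the currently open <button>, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and "alt" not in attrs:
            # alt="" is allowed (decorative image); a missing alt is not.
            self.issues.append(f"img missing alt: {attrs.get('src', '?')}")
        elif tag == "button":
            self._button = {"label": attrs.get("aria-label") or "", "text": ""}

    def handle_data(self, data):
        if self._button is not None:
            self._button["text"] += data.strip()

    def handle_endtag(self, tag):
        if tag == "button" and self._button is not None:
            if not (self._button["label"] or self._button["text"]):
                self.issues.append("button with no accessible name")
            self._button = None


# Hypothetical page fragment for illustration.
SAMPLE = """
<img src="/hero.png">
<img src="/logo.png" alt="Acme logo">
<button aria-label="Add to basket"></button>
<button><span class="icon-cart"></span></button>
<button>Checkout</button>
"""

audit = AccessibleNameAudit()
audit.feed(SAMPLE)
for issue in audit.issues:
    print(issue)
```

On the sample fragment this flags the hero image and the icon-only button. Treat the output as a prompt for a proper review, not as a pass or fail result.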

What this means for your strategy

The practical implications, ranked by commercial impact:

One: keep doing foundational SEO well. Helpful, reliable, people-first content. Clear technical structure. Real expertise on the page — the kind humans contribute, not AI alone. Original perspective. If you got this right under Google’s helpful content updates, you are already most of the way there.

Two: stop paying for AEO and GEO consulting that promises “AI-specific” tactics. Google has now publicly framed those tactics as unnecessary for Google Search. If a vendor pitches you llms.txt installation, content chunking refactors, or “AI rewrites” of your existing content as their core deliverable, ask them what they think of the 15 May Google guide.

Three: invest in original perspective. Google’s emphasis on first-hand experience and unique viewpoints is significant. AI systems can synthesise commodity content trivially. They cannot synthesise actual expert experience, and that experience is what AI engines cite. Whatever you know that nobody else has written down is now your single biggest content advantage.

Four: pay attention to agentic experiences and UCP. This is the genuinely new front. The companies that will benefit most are the ones treating their site as something an AI agent has to navigate, not just something a human reads.

Five: track AI referrals separately. Google’s guide does not address measurement directly, but every business we work with is now monitoring AI traffic (ChatGPT, Perplexity, Claude, Copilot) as a separate channel in GA4. AI traffic converts at a materially higher rate than ordinary organic. Tracking it lets you justify investment in the right places.
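For teams that want to apply the same split to exported session or log data, a minimal sketch of the classification step might look like this. The hostname-to-channel mapping is an assumption for illustration, not an official or complete list, and the function name is hypothetical; check the mapping against the referrers that actually appear in your own analytics.

```python
from urllib.parse import urlparse

# Hostname -> channel label. This mapping is an illustrative assumption;
# verify it against the referrers in your own data before relying on it.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url: str) -> str:
    """Return the AI channel label for a referrer URL, or "Other"."""
    host = urlparse(referrer_url).hostname or ""
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host, "Other")


for url in [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=lawn+weeds",
    "https://www.google.com/",
    "",
]:
    print(repr(url), "->", classify_referrer(url))
```

Exact hostname matching is a deliberate choice here: it avoids mislabelling unrelated domains that merely contain one of these strings, at the cost of needing an update whenever a platform changes its referrer domain.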

Where Bring sits on this

Our position on AEO and GEO has been consistent since these terms started circulating: they are useful shorthand for the work, not separate disciplines. Google has now publicly agreed.

The work itself hasn’t changed. Helpful content with real expertise. Clear technical structure. Original perspective. Earned authority. The labels people put on it (SEO, AEO, GEO, AIO) are mostly marketing terminology for the same underlying craft.

What has changed is the consulting market. There is now an officially sanctioned response to vendors selling exotic AI optimisation tactics: forward them the Google guide. We expect the AEO and GEO consulting market to consolidate sharply in the next 12 months, with the operators who confused customers with bespoke methodology losing ground to operators who simply do excellent SEO and integrate AI search measurement properly.

If you want to know how this changes anything specific in your current SEO programme, we offer a free 30-minute read of your current content strategy against the Google guide and the wider AI search landscape. No pitch follow-up unless you ask for one.

Frequently asked questions

Is AEO dead?

No. The label is increasingly redundant for Google Search, per Google’s official position. The work it describes (helping content surface in AI answers) is still important. It is now better understood as one outcome of doing SEO well, rather than a separate discipline.

Should I remove llms.txt from my site?

No. There’s no commercial benefit to publishing one for Google Search, but no harm in keeping an existing one if it’s already there. Some non-Google AI systems do parse llms.txt when present. Treat it as a near-zero-cost optional file.

Do I still need structured data for AI search?

Not strictly. Google’s guide says structured data isn’t required for generative AI search. We still recommend it because it materially improves rich result eligibility, helps Google understand the entity relationships on your page, and is good SEO hygiene regardless of AI. Add it because it’s foundational, not because it’s an “AI hack.”

Does the guide apply to ChatGPT, Perplexity, and Claude?

No. Google’s guide speaks only for Google Search’s AI features (AI Overviews and AI Mode). Other AI systems retrieve content through their own infrastructure (typically Bing or proprietary indexes), so the optimisation principles overlap but are not identical.

What’s the single most important thing in the guide?

The agentic experiences section. Google has publicly acknowledged browser-based AI agents and named UCP as the emerging protocol for AI commerce. This is where the meaningful work for the next 12 months sits, and it’s the bit most commentary will overlook.

What should I do first after reading this?

Read the Google guide yourself (linked below). Audit your existing AI optimisation work against the four “myths” section. Brief your dev team on UCP. Make sure your AI referral traffic is being tracked separately in GA4.

Sources and further reading

- Google Search Central: Optimising your website for generative AI features on Google Search (15 May 2026)

- Google Search Central: Creating helpful, reliable, people-first content

- web.dev: Agent-friendly website best practices

- Universal Commerce Protocol documentation

Get a read on where you stand

Bring’s free 30-minute strategy review now includes a read of your current SEO and content programme against Google’s 15 May 2026 AI search guide. We’ll tell you where you’re already aligned, where you’re spending money on tactics Google has now publicly dismissed, and where the agentic experiences shift means you need to plan ahead. Book a slot here.